The ExtremeEarth project

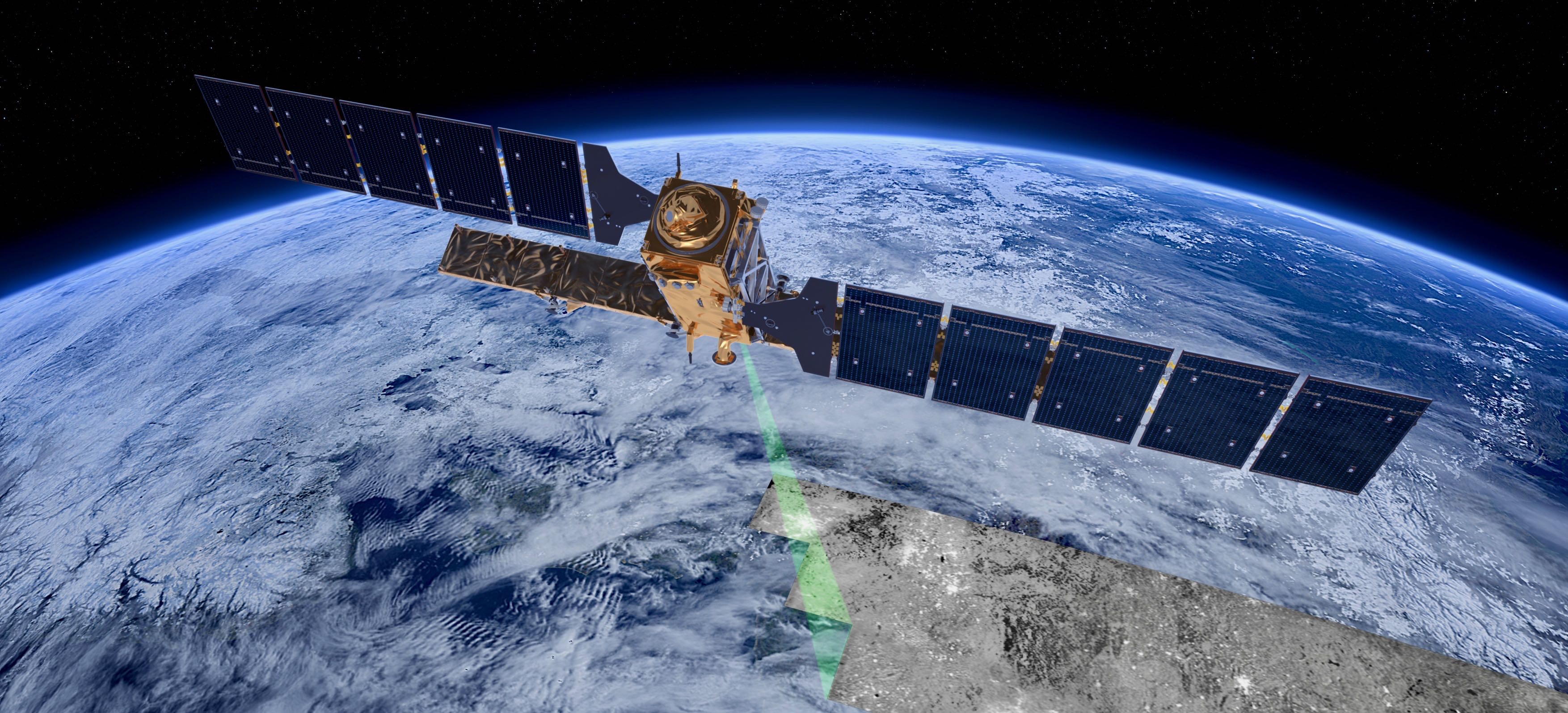

The data and information that Copernicus processes and disseminates put it at the forefront of the big data paradigm, giving rise to all of the associated challenges, the so-called 5 Vs: volume, velocity, variety, veracity and value. Two important activities related to Copernicus are the Thematic Exploitation Platforms (TEPs) and the Data and Information Access Services (DIAS).

Although the TEPs and DIAS have been welcomed by the Earth Observation (EO) data user community, they share a disadvantage: they target users who are experts in EO data and technologies, and ignore the many software developers who might not be EO experts but still have a lot to gain by integrating EO data into their applications. Opening up the TEPs and DIAS can therefore be an important way of making the development of downstream applications easy for both EO and non-EO experts. This means extracting the information and knowledge hidden in the data, publishing it using linked data technologies, and interlinking it with data in other TEPs and DIAS and with other non-EO data, information and knowledge.

Highly scalable Artificial Intelligence techniques based on deep neural network architectures have recently been developed for multimedia images by big North American companies such as Google and Facebook. Similar architectures for satellite images that can manage the extreme scale and characteristics of Copernicus data do not exist today. Deep neural networks classify multimedia images effectively and efficiently because they have been trained on extremely large benchmark datasets consisting of millions of images (e.g., ImageNet) and exploit big data, cloud and GPU technologies. Training datasets with millions of samples do not exist today in the Copernicus context, and published deep learning architectures for Copernicus satellite images typically run on a single GPU and do not take advantage of recent advances such as distributed scale-out deep learning.

The main objective of ExtremeEarth is to go beyond the four projects mentioned above by developing extreme Earth analytics techniques and technologies that scale to the petabytes (PBs) of big Copernicus data, information and knowledge, and by applying these technologies in two of the ESA TEPs: Food Security and Polar.

The developed technologies extended the HOPS data platform to offer unprecedented scalability to extreme data volumes and scale-out distributed deep learning for Copernicus data. The extended HOPS data platform runs on CREODIAS and is available as open source to enable its adoption by the strong European Earth Observation downstream services industry.

Polar Use Case

The Polar Regions play a critical role in regulating and driving the Earth's climate and ecological systems, and are currently experiencing significant change. New economic opportunities are resulting in increased attention and vessel traffic, leading to growing global interest both politically and economically.

Identifying and investigating satellite imagery of sea ice is a complex and in some cases frustrating task for the sea ice analyst. As the seasons change, so do the reflectivity signals coming from the ice, meaning SAR imagery can look different from one month to the next. This is why MET Norway has dedicated sea ice analysts drawing our ice charts from the local knowledge built up and passed down through the years.

Automating sea ice charting to reduce manual labour is an ultimate goal, and the ExtremeEarth project has made great strides towards it. As part of the Polar Use Case we have developed a workflow that takes satellite imagery, transfers it to the Polar Thematic Exploitation Platform (Polar TEP) and classifies the image(s) using algorithms developed by our partners.

Deep Learning

Polar

The Polar Use Case exploits time series of Sentinel-1 SAR images to explore deep learning (DL) processing architectures and algorithms that cope with the extreme analytics and big data challenges associated with sea ice monitoring. In this context, sea ice monitoring refers to ice charting, i.e., classifying sea ice scenes into ice types. Two classification tasks are typically explored: (i) binary classification, ice vs. water, and (ii) multi-class classification, multiple ice types and water (i.e., leads and open water).
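The relationship between the two classification tasks can be illustrated with a short sketch. The class names below are hypothetical placeholders, not the label set used in the project; the only point is that a binary ice/water map can be derived from a multi-class one by collapsing every ice type into a single class.

```python
# Hypothetical multi-class label set; the project's actual classes may differ.
WATER_CLASSES = {"open_water", "lead"}
ICE_CLASSES = {"young_ice", "first_year_ice", "old_ice"}


def to_binary(label: str) -> str:
    """Collapse a multi-class label into the binary ice-vs-water task."""
    if label in WATER_CLASSES:
        return "water"
    if label in ICE_CLASSES:
        return "ice"
    raise ValueError(f"unknown label: {label}")


multi_class_map = ["open_water", "young_ice", "lead", "old_ice"]
binary_map = [to_binary(label) for label in multi_class_map]
```

Raising an error on unknown labels, rather than guessing, keeps annotation mistakes visible when label sets from different sources are merged.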

The Polar use case of ExtremeEarth implemented deep learning architectures that can extract reliable and accurate information about sea ice and polar phenomena from large-scale datasets. During the project we used several methods to achieve our results: supervised learning, pixel-wise classification, semi-supervised learning and distributed training. These approaches produce different results and suit different use cases.

Food Security

Although deep learning models have proved effective for classifying agricultural areas, accurate crop type mapping faces two main challenges: (i) dealing with time series of images acquired over different tiles, which have different temporal properties, and (ii) handling a severely imbalanced classification problem. The proposed system architecture consists of four main steps: optical pre-processing, training of the multitemporal deep learning model, crop type map production, and crop type map update.
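The text does not say how the class imbalance was handled. One common option, shown here only as an illustration and not as the project's method, is to weight each class inversely to its frequency so that rare crop types contribute more to the training loss. The crop names and counts are made up.

```python
from collections import Counter


def inverse_frequency_weights(labels):
    """Return a weight per class, proportional to 1 / class frequency.

    Weights are scaled so that a perfectly balanced dataset gets 1.0 everywhere.
    """
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


# Hypothetical pixel labels: one dominant crop and two rare ones.
labels = ["maize"] * 8 + ["soybean"] * 1 + ["sunflower"] * 1
weights = inverse_frequency_weights(labels)
```

In this toy dataset the two rare classes receive a weight eight times larger than the dominant one, mirroring the ratio of their sample counts.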

Our deep learning model is a multi-layer Long Short-Term Memory (LSTM) network. Compared with a single-layer LSTM, a multi-layer LSTM has a stronger ability to model the information in time series data, although it is computationally expensive to train because of the complex structure of the LSTM memory cell. In greater detail, the model consists of three LSTM layers with 200, 125 and 100 hidden units, respectively, followed by a fully connected layer and a softmax layer that provides the classification result at pixel level.
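To give a rough sense of the training cost, the sketch below counts the trainable parameters of such a stack using the standard LSTM formulation (four gates, one bias vector per gate). The hidden sizes come from the text; the number of input features per time step and the number of crop classes are not stated, so the values used here are assumptions chosen purely for illustration.

```python
def lstm_layer_params(input_size: int, hidden_size: int) -> int:
    """Trainable parameters of one standard LSTM layer.

    Four gates (input, forget, cell, output), each with an input weight
    matrix, a recurrent weight matrix and one bias vector.
    """
    return 4 * (hidden_size * (input_size + hidden_size) + hidden_size)


def model_params(n_features: int, hidden_sizes, n_classes: int) -> int:
    """Parameters of stacked LSTM layers followed by a fully connected layer."""
    total = 0
    layer_input = n_features
    for hidden in hidden_sizes:
        total += lstm_layer_params(layer_input, hidden)
        layer_input = hidden  # each layer feeds the next one
    total += layer_input * n_classes + n_classes  # fully connected layer
    return total  # the softmax layer has no trainable parameters


# Hidden sizes are from the text; n_features and n_classes are assumptions.
total = model_params(n_features=10, hidden_sizes=[200, 125, 100], n_classes=17)
```

Some frameworks store two bias vectors per gate, so the count they report can be slightly higher than this formula gives.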

ExtremeEarth Infrastructure

The deep learning architectures and techniques discussed so far were implemented on Hopsworks, a horizontally scalable platform for Data Intensive Artificial Intelligence. Hopsworks brings support for scale-out AI with Earth Observation data from the Copernicus programme and the H2020 ExtremeEarth project.

Hopsworks provides an integrated platform for managing the entire lifecycle of data as well as developing machine learning applications and pipelines. In order to develop and put in production a machine learning model, the input data needs to be processed and transformed through a series of stages. Each stage serves a distinct purpose and all the stages chained together transform the input Earth observation data into an ML model that application clients can use. These stages are: Data ingestion and pre-processing, Feature Engineering and Feature Validation, Training, Model Analysis/Serving/Monitoring, and Orchestration.
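The idea of chaining stages can be sketched in plain Python. This is not the Hopsworks API: every function below is a hypothetical stand-in, and the "model" is a trivial placeholder. The sketch only shows how the output of one stage becomes the input of the next.

```python
from functools import reduce


def ingest(raw):
    """Data ingestion and pre-processing: drop missing values."""
    return [value for value in raw if value is not None]


def engineer_features(values):
    """Feature engineering: scale values to the [0, 1] range."""
    low, high = min(values), max(values)
    return [(value - low) / (high - low) for value in values]


def validate_features(features):
    """Feature validation: fail early if a feature is out of range."""
    if not all(0.0 <= feature <= 1.0 for feature in features):
        raise ValueError("feature outside [0, 1]")
    return features


def train(features):
    """Training: a stand-in 'model' that just stores the feature mean."""
    return {"threshold": sum(features) / len(features)}


def run_pipeline(raw, stages):
    """Orchestration: feed the output of each stage into the next."""
    return reduce(lambda data, stage: stage(data), stages, raw)


stages = [ingest, engineer_features, validate_features, train]
model = run_pipeline([2.0, None, 4.0, 6.0], stages)
```

Keeping each stage a separate function with a single input and output makes it possible to test, replace or rerun one stage without touching the others.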

In the ExtremeEarth project, the deep learning architectures run on Hopsworks so that the analysis scales to big Copernicus data. Hopsworks provides services to move the processing to the data and is based on a Cloud Computing Platform-as-a-Service approach.

Once the ExtremeEarth technologies were integrated in Hopsworks, they were deployed in the two TEPs over CREODIAS. Copernicus data is available in the same environment and was used to develop the ExtremeEarth technologies.

Linked Data Tools

The results of the deep learning techniques for satellite image analysis are geospatial information that encodes knowledge about the domain of each of the two ExtremeEarth use cases. ExtremeEarth follows the linked data paradigm so that users can extract value from this knowledge by accessing semantic information, interlinking it with other open linked data sources, and publishing it for reuse.

GeoTriples-spark supports the automatic transformation of geospatial data from various formats into RDF using Semantic Web standards. GeoTriples-spark was deployed on the HOPS platform, enabling users to transform big geospatial data into RDF at the extreme scales of the Copernicus paradigm.
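As a rough illustration of what geospatial RDF looks like, the sketch below encodes one feature and its geometry as N-Triples using the GeoSPARQL vocabulary. It does not use GeoTriples and does not reproduce its interface or output; the `example.org` namespace, the feature identifier and the coordinates are hypothetical.

```python
GEO = "http://www.opengis.net/ont/geosparql#"
BASE = "http://example.org/"  # hypothetical namespace for illustration


def feature_to_ntriples(feature_id: str, wkt: str) -> list:
    """Encode one feature and its geometry as GeoSPARQL-style N-Triples."""
    feature = f"<{BASE}feature/{feature_id}>"
    geometry = f"<{BASE}geometry/{feature_id}>"
    return [
        f"{feature} <{GEO}hasGeometry> {geometry} .",
        f'{geometry} <{GEO}asWKT> "{wkt}"^^<{GEO}wktLiteral> .',
    ]


triples = feature_to_ntriples("field42", "POINT (16.37 48.21)")
```

Separating the feature from its geometry follows the GeoSPARQL pattern, in which the geometry is a resource of its own that carries a WKT literal.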

JedAI-spatial is based on the meta-blocking technique for entity resolution and can discover geospatial relationships among resources in a geospatial RDF store, as pioneered by UoA.

Strabon is an open-source spatiotemporal RDF store. In ExtremeEarth, the new cloud-based version, Strabo 2, was developed with the aim of scaling to PBs of data on the HOPS platform.

Semagrow is a data federation engine that provides unified access to geospatial and relational data. Semagrow was adapted and extended, and the resulting component was used to federate geospatial data sources residing in the new cloud-based Strabo 2 implementation and in other geospatial data servers.

Training Datasets

Training our models requires large amounts of accurate data. Satellite remote sensing currently lacks such datasets, and from an operational viewpoint it is not feasible to assume that enough ground truth or annotated data will be available for training a deep network. In ExtremeEarth we developed very large training datasets for deep learning architectures, targeting the classification of Sentinel images for the Polar and Food Security use cases.

To address the lack of labeled training databases in the Food Security use case, publicly available thematic products at the country level were used to generate a large set of weak reference data. We considered the Austrian crop type map, officially named INVEKOS Schläge, which is based on farmer declarations (for the crop types) and the administrative Geographic Information System (GIS) (for the field geometry).

For the Polar use case, we focused on high-resolution mapping from SAR, using Sentinel-1 as our primary source of data. Auxiliary data from other high-resolution SAR satellites (TerraSAR-X, RADARSAT-2) and/or co-located optical data (Sentinel-2 and Sentinel-3, Landsat) may also support the creation of the training database. The task of creating a training database for sea ice classification has been approached from two angles: (1) expert analysis of Sentinel-1 with near-simultaneous optical data from Sentinel-2 and Sentinel-3, covering the European Arctic, and (2) active learning using data from Belgica Bank, based solely on Sentinel-1 data.
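The text does not specify which query strategy the active learning approach used. A minimal sketch of one widely used strategy, uncertainty sampling, is given below as an assumption: the patches whose predicted class distribution has the highest entropy are sent to an expert for labelling first. The patch names and probabilities are invented.

```python
import math


def entropy(probabilities):
    """Shannon entropy of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probabilities if p > 0)


def select_for_labelling(predictions, budget):
    """Return the ids of the `budget` samples the model is least sure about."""
    ranked = sorted(predictions, key=lambda sid: entropy(predictions[sid]), reverse=True)
    return ranked[:budget]


# Hypothetical (ice, water) probabilities for three unlabeled patches.
predictions = {
    "patch_a": [0.98, 0.02],
    "patch_b": [0.55, 0.45],
    "patch_c": [0.80, 0.20],
}
to_label = select_for_labelling(predictions, budget=2)
```

After the expert labels the selected patches, the model is retrained and the selection is repeated, so the labelling effort concentrates on the cases the classifier finds hardest.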

Copyright AI TEAM 2019